Real-Time Business Solutions Offered By Drones

For many enterprises, drones function as a valuable asset. Drone technology continues to grow exponentially, offering convenience, efficiency, and speed to adapt swiftly to dynamic

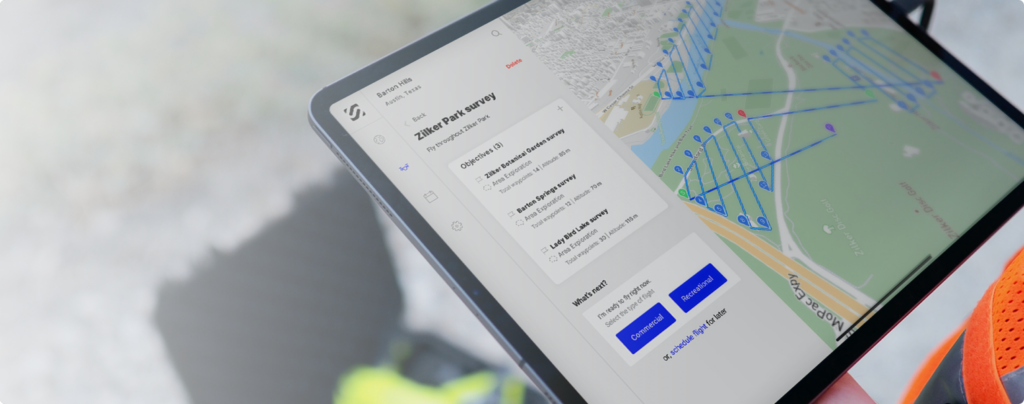

Drone Automation Made Easy with SkyGrid Flight Control

Drones have been a subject of interest in recent times for the convenience, flexibility, and efficiency they offer. More than that, their potential to possibly

All-in-One Drone App for Pilots and Enterprises

In case you missed it, we launched our all-in-one drone app, SkyGrid Flight Control, on the Apple App Store for iPhone and iPad users across the

Drone Automation Made Easy for Commercial Pilots

Drones are disrupting a wide variety of industries and innovating outdated business models. Just in the last few months, drones delivered test kits and disinfected

Automate Drone Flight Planning with SkyGrid Flight Control

No matter your mission, whether to inspect a pipeline, respond to an emergency, or secure a perimeter, the drone flight planning process shouldn’t be so

SkyGrid Flight Control: An All-in-One Drone App

In case you missed it, SkyGrid just launched the first all-in-one drone app. What does that mean exactly? It means pilots can manage their entire